How Physics-Based AI Animation Boosts Video Quality

Physics-based AI animation is changing how videos are made by applying physical laws like gravity and momentum to create realistic motion. Unlike older AI systems that relied on visual patterns, these models simulate object behavior and material properties, reducing errors like glitches and unnatural movements. The result is smoother transitions, fewer visual distortions, and more believable scenes.

Key takeaways:

- Realistic motion: Models calculate movement using physical principles, avoiding mistakes like "foot sliding."

- Fewer errors: Anchoring textures to 3D geometry minimizes glitches like flickering or teleporting objects.

- Better flow: Smooth motion between frames is achieved by applying Newtonian physics.

This approach saves time for creators by reducing post-production fixes and enabling complex sequences like sports scenes or intricate animations. Research frameworks like PhysAnimator and MotionCraft demonstrate the practical benefits, offering improved video quality and easier workflows.



How Physics-Based AI Animation Improves Video Quality

Physics-based AI animation brings three major upgrades compared to older pixel-prediction methods: motion that feels grounded in real-world physics, fewer visual errors like glitches or distortions, and smoother transitions between frames. Together, these improvements tackle the key issues that often make AI-generated videos seem unnatural or unconvincing.

Realistic Motion Through Physical Laws

Instead of guessing the next frame from visual patterns alone, physics-based systems calculate motion from physical principles. For example, models like Kling O1 reason through the expected outcome before generating a single pixel - like how gravity accelerates a falling object at about 32 feet per second squared (roughly 9.8 m/s²) or how momentum is conserved during a collision.
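To make those two principles concrete, here is a minimal Python sketch of free fall and a one-dimensional collision where the bodies stick together. The function names are hypothetical and chosen for illustration; this is not how Kling O1 or any other model is implemented.

```python
# Illustrative helpers; the names are hypothetical, not from any model's codebase.
G_FT_PER_S2 = 32.174  # standard gravity, about 32 ft/s^2 (9.81 m/s^2)


def fall_speed(seconds, g=G_FT_PER_S2):
    """Speed (ft/s) of an object dropped from rest, ignoring air resistance."""
    return g * seconds


def fall_distance(seconds, g=G_FT_PER_S2):
    """Distance (ft) fallen from rest after `seconds`, ignoring air resistance."""
    return 0.5 * g * seconds ** 2


def sticky_collision_velocity(m1, v1, m2, v2):
    """Shared 1D velocity after two bodies collide and stick together.

    Total momentum m1*v1 + m2*v2 is the same before and after the collision.
    """
    return (m1 * v1 + m2 * v2) / (m1 + m2)


if __name__ == "__main__":
    print(fall_speed(1.0))       # speed after one second of free fall
    print(fall_distance(1.0))    # distance covered in that second
    print(sticky_collision_velocity(2.0, 3.0, 1.0, 0.0))
```

A generated clip that shows a dropped object moving at a constant speed, or two colliding objects that both speed up, would contradict these simple relationships.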

In March 2026, researchers from Washington University in St. Louis and NVIDIA introduced the PhysAlign framework, which teaches models rigid-body kinematics through a synthetic data pipeline. Using just 3,000 synthetic video clips created in Blender, the model learned concepts like gravitational pull and momentum-driven trajectories. This means that when a glass falls in a scene, the system calculates whether it should shatter based on factors like its material and the speed of impact - just like in real life. This attention to detail not only makes the motion more believable but also simplifies production.

A key improvement comes from specialized solvers that address common animation errors, such as "foot sliding." These solvers detect where feet make contact with the ground, lock their positions, and calculate realistic ground reaction forces to ensure natural movement.
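The contact-locking idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the foot is a single point, the y axis points up, the ground is at height 0, and the `height_threshold` value is arbitrary. It only covers detecting contact and locking position; the ground reaction force calculation is left out.

```python
def lock_foot_contacts(positions, height_threshold=0.02):
    """Pin a foot in place while it is touching the ground.

    positions: list of (x, y, z) foot positions per frame, with y pointing up.
    A frame counts as "in contact" when y <= height_threshold (an assumed value).
    During a contact phase the foot keeps the x/z it had when contact began,
    which removes horizontal sliding.
    """
    corrected = []
    anchor = None  # (x, z) where the current contact phase started
    for x, y, z in positions:
        if y <= height_threshold:
            if anchor is None:
                anchor = (x, z)          # first frame of this contact phase
            corrected.append((anchor[0], 0.0, anchor[1]))
        else:
            anchor = None                # foot lifted, release the lock
            corrected.append((x, y, z))
    return corrected


if __name__ == "__main__":
    frames = [(0.0, 0.10, 0.0), (0.10, 0.01, 0.0), (0.15, 0.0, 0.0), (0.30, 0.20, 0.0)]
    for frame in lock_foot_contacts(frames):
        print(frame)
```

In the example frames, the foot drifts from x = 0.10 to x = 0.15 while on the ground; after locking, both contact frames share the same position.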

Fewer Visual Artifacts and Distortions

Older AI video tools often struggle with issues like objects "teleporting" or textures flickering. Physics-based methods solve these problems by anchoring textures to the 3D geometry of the model.

Take the PhysVid framework, developed by Eindhoven University of Technology researchers in March 2026. It uses physics-based annotations and "negative physics prompts" to guide models away from unrealistic movements. The result? A 33% improvement in physical commonsense scores on the VideoPhy benchmark. Additionally, physics engines enforce anatomical and joint constraints, ensuring that skeletal movements stay within realistic limits. This avoids bizarre outcomes like limbs bending unnaturally or objects disappearing to "cheat" motion calculations.

By respecting principles like joint angle limits and mass conservation, these systems eliminate many of the visual errors that break immersion in traditional AI-generated videos.
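As a rough illustration of a joint angle limit, the Python sketch below clamps each joint in a pose to an allowed range. The joint names and the degree ranges are assumptions made up for the example; a real physics engine or character rig defines its own constraints.

```python
# Illustrative limits in degrees; real rigs define their own per-joint ranges.
JOINT_LIMITS_DEG = {
    "knee": (0.0, 150.0),
    "elbow": (0.0, 145.0),
}


def clamp_pose(pose, limits=JOINT_LIMITS_DEG):
    """Return a copy of `pose` with every known joint angle inside its limits.

    pose: dict mapping joint name -> angle in degrees.
    Joints without an entry in `limits` are passed through unchanged.
    """
    clamped = {}
    for joint, angle in pose.items():
        low, high = limits.get(joint, (float("-inf"), float("inf")))
        clamped[joint] = min(max(angle, low), high)
    return clamped


if __name__ == "__main__":
    # A knee bent backwards by 20 degrees is pulled back to its lower limit.
    print(clamp_pose({"knee": -20.0, "elbow": 90.0}))
```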

Better Temporal Consistency

One of the standout benefits of physics-based AI is its ability to maintain logical motion between frames. By using optical flow to estimate velocity, these models apply Newtonian principles like constant acceleration, avoiding the erratic "drifting" seen in older methods.

In November 2025, researchers from Stony Brook University and CNRS unveiled NewtonRewards, a post-training framework that fixes kinematic errors in real time. When tested on the NewtonBench-60K dataset, the system successfully ensured smooth, constant-acceleration paths in scenarios like parabolic throws.
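One simple way to check for constant acceleration is to look at second differences of an object's position across evenly spaced frames. The Python sketch below is an illustration of that idea only, not the NewtonRewards method; it assumes the object's position has already been tracked per frame, and the function names are hypothetical.

```python
def acceleration_estimates(positions, dt):
    """Estimate acceleration at each interior frame with central second differences."""
    return [
        (positions[i + 1] - 2.0 * positions[i] + positions[i - 1]) / dt ** 2
        for i in range(1, len(positions) - 1)
    ]


def constant_acceleration_residual(positions, dt):
    """Largest deviation of any per-frame acceleration from the mean acceleration.

    Close to zero for a constant-acceleration path such as a parabolic throw;
    larger when the motion jitters or drifts.
    """
    acc = acceleration_estimates(positions, dt)
    mean = sum(acc) / len(acc)
    return max(abs(a - mean) for a in acc)


if __name__ == "__main__":
    dt = 0.1
    # Height of a ball thrown upward at 5 m/s under 9.8 m/s^2 gravity.
    throw = [5.0 * (i * dt) - 0.5 * 9.8 * (i * dt) ** 2 for i in range(10)]
    print(constant_acceleration_residual(throw, dt))

    jittery = list(throw)
    jittery[5] += 0.5  # one frame where the ball jumps half a meter
    print(constant_acceleration_residual(jittery, dt))
```

A residual like this could, in principle, serve as a penalty signal: the clean throw scores near zero, while the trajectory with a single jump scores much higher.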

These models also process video in connected "chunks," using attention mechanisms that focus on local physics rather than relying on broad, generalized prompts. This approach prevents abrupt, unrealistic changes - like objects suddenly speeding up or changing direction without reason. The PhysVid team demonstrated an 8% improvement in physical commonsense scores on the VideoPhy2 benchmark compared to larger, less physics-aware models. This suggests that physics awareness can matter more than model size when it comes to maintaining smooth, believable motion.

These advancements lay the groundwork for the research findings and viewer engagement insights covered in the next sections.

Research and Case Studies

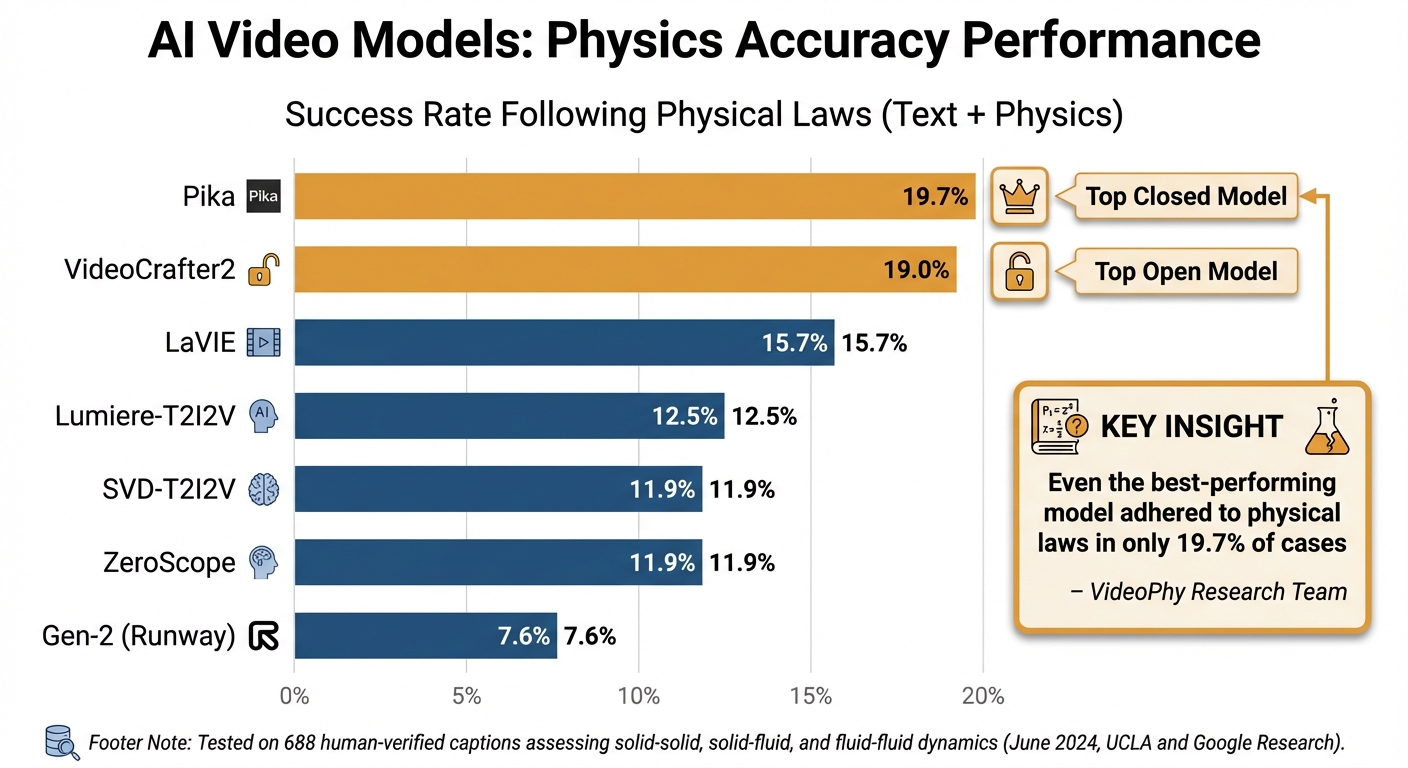

AI Video Model Physics Accuracy Benchmark Comparison

Recent case studies highlight how grounding AI in physical laws can significantly improve video quality by minimizing artifacts and ensuring smoother motion. Let’s dive into some practical applications of these physics-based methods.

PhysAnimator: Realistic Anime Motion

In January 2025, researchers from UCLA and Netflix introduced PhysAnimator, a framework designed to transform static anime illustrations into lifelike animations. The team, led by Tianyi Xie and Yiwei Zhao, utilized the Segment Anything Model (SAM) to segment characters, converted them into 2D triangulated meshes, and applied motion equations using the semi-implicit Euler method.
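The semi-implicit Euler method updates velocity first and then uses the new velocity to update position. The Python sketch below shows the update rule on a single mass attached to a spring, which is a deliberate simplification: PhysAnimator applies its motion equations to 2D triangulated meshes, and the function names and parameters here are assumptions for illustration.

```python
def semi_implicit_euler_step(x, v, force, mass, dt):
    """Advance one time step: update velocity first, then position with the NEW velocity."""
    v_next = v + dt * force(x) / mass
    x_next = x + dt * v_next
    return x_next, v_next


def simulate_spring(x0, v0, stiffness, mass, dt, steps):
    """Integrate a 1D mass on a spring (force = -stiffness * x) for `steps` steps."""
    x, v = x0, v0
    for _ in range(steps):
        x, v = semi_implicit_euler_step(x, v, lambda pos: -stiffness * pos, mass, dt)
    return x, v


def spring_energy(x, v, stiffness, mass):
    """Kinetic plus elastic potential energy of the mass-spring system."""
    return 0.5 * mass * v * v + 0.5 * stiffness * x * x


if __name__ == "__main__":
    x, v = simulate_spring(x0=1.0, v0=0.0, stiffness=1.0, mass=1.0, dt=0.01, steps=10000)
    # Energy starts at 0.5 and should stay close to it over the whole run.
    print(spring_energy(x, v, stiffness=1.0, mass=1.0))
```

Using the updated velocity in the position step is what keeps the energy of an oscillating system bounded over long runs, which is one reason this integrator is popular for swaying hair and cloth.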

PhysAnimator employs deformable body simulations to recreate natural movements like hair swaying and clothing fluttering. A standout feature is its "energy strokes", which let creators apply forces - such as wind or attraction - to specific areas, offering precise control over the animation's physics.

"PhysAnimator integrates physics-based animation with data-driven generative models to synthesize visually compelling dynamic animations." - Tianyi Xie et al., PhysAnimator

The system also addresses a common problem in animation: "black hole" artifacts. These occur when moving objects reveal previously hidden areas. To solve this, PhysAnimator warps a texture-agnostic sketch before using a sketch-guided video diffusion model to fill in the final, colorized frames. This method avoids distortions caused by directly warping raw images.

MotionCraft: Physics-Driven Zero-Shot Video Generation

In May 2024, with a revision in October 2024, researchers from Politecnico di Torino, led by Luca Savant Aira, introduced MotionCraft. This framework uses physics-based optical flow to warp the noise latent space of diffusion models instead of manipulating pixels directly.

This approach allows the model to "hallucinate" missing elements, ensuring seamless scene evolution. When objects move and expose new areas, the model generates these regions naturally, avoiding gaps or visual inconsistencies. MotionCraft has been shown to outperform Text2Video-Zero in handling intricate motion dynamics.
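To give a feel for what warping a latent with a flow field involves, here is a toy Python sketch on a small 2D grid. It is not MotionCraft's implementation: the integer-valued flow, the nearest-neighbor lookup, and the choice to fill newly exposed cells with fresh Gaussian noise are all assumptions made for illustration.

```python
import random


def warp_latent(latent, flow, rng=None):
    """Backward-warp a 2D noise latent by an integer flow field.

    latent: list of rows of floats.
    flow:   same shape; flow[y][x] = (dx, dy) means the value now at (x, y)
            came from (x - dx, y - dy) in the previous latent.
    Cells whose source lies outside the grid are newly exposed; they get fresh
    Gaussian noise (an assumption for this sketch) so a generator could fill them.
    """
    if rng is None:
        rng = random.Random()
    height, width = len(latent), len(latent[0])
    warped = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = flow[y][x]
            src_x, src_y = x - dx, y - dy
            if 0 <= src_x < width and 0 <= src_y < height:
                row.append(latent[src_y][src_x])
            else:
                row.append(rng.gauss(0.0, 1.0))
        warped.append(row)
    return warped


if __name__ == "__main__":
    latent = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
    shift_right = [[(1, 0)] * 3 for _ in range(2)]  # everything moves one cell right
    for row in warp_latent(latent, shift_right, rng=random.Random(0)):
        print(row)
```

In the example, existing values shift one cell to the right and the left column, which has no source, is filled with new noise.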

"Warping the noise latent space results in coherent application of the desired motion while allowing the model to generate missing elements consistent with the scene evolution, which would otherwise result in artefacts." - Luca Savant Aira et al., MotionCraft

Performance Benchmarks

In June 2024, UCLA and Google Research developed the VideoPhy benchmark to evaluate how well AI video models follow physical laws. Led by Hritik Bansal, the team tested nine models using 688 human-verified captions that assessed interactions like solid-solid, solid-fluid, and fluid-fluid dynamics.

The findings were striking: even the best-performing model adhered to physical laws in only 19.7% of cases. Pika, the top closed model, achieved this modest success rate, while VideoCrafter2, the leading open model, followed at 19%. Other models, including Runway's Gen-2, struggled even more, with Gen-2 scoring just 7.6%.

| Model | Success Rate (Text + Physics) |
|---|---|
| Pika | 19.7% |
| VideoCrafter2 | 19.0% |
| LaVIE | 15.7% |
| Lumiere-T2I2V | 12.5% |
| SVD-T2I2V | 11.9% |
| ZeroScope | 11.9% |
| Gen-2 (Runway) | 7.6% |

"The best performing model, Pika, generates videos that adhere to the caption and physical laws for only 19.7% of the instances." - VideoPhy Research Team

These results emphasize the importance of integrating physical constraints into AI video models. By doing so, creators can achieve higher visual accuracy and smoother production workflows, all while delivering more engaging content.

Impact on Viewer Engagement and Content Creation

Higher Viewer Engagement Through Realistic Motion

People are surprisingly good at noticing when something in a video doesn’t look right, even if they can’t explain why. Research from Physion Labs found that viewers pick up on physical glitches in 25%–37% of exocentric videos and a striking 48%–62% of egocentric videos. These errors - like objects teleporting, morphing, or defying the basic laws of motion - can lead to disengagement. And they remain common: 83.3% of exocentric and 93.5% of egocentric AI-generated videos still show at least one noticeable error. This subconscious sensitivity to physical accuracy is why videos that follow real-world physics feel more believable.

"Viewers subconsciously detect physics violations, making physically accurate videos feel more real even when the difference is hard to articulate." - Alexis, AI Engineer, Bonega.ai

Production Efficiency for Content Creators

Physics-based AI doesn’t just improve viewer engagement - it’s also a game-changer for content creators. By automating the process of rendering realistic motion, this technology slashes post-production time. Instead of spending hours fixing common issues like floating objects, teleportation, or unnatural deformations, creators can focus their energy on storytelling and refining their narrative. Advanced AI architectures now track object identity across frames, reducing errors and minimizing the need for manual corrections.

Platforms like LongStories.ai are taking these innovations further. They enable YouTube creators to produce high-quality, long-form videos up to 10 minutes with ease. By offering tools to create reusable "Universes" - complete with consistent characters, styles, and voices - creators can maintain their brand identity while sidestepping traditional time-consuming tasks like animation, voiceover recording, and editing. With options like No Animation, Fast Animation, and Pro Animation tiers, along with bulk editing features, creators can meet the frequent posting demands of platform algorithms while building a steady income stream. For videos longer than 30 seconds, video extension techniques are essential, but ensuring physical continuity at boundaries is critical, as physics accuracy tends to degrade with extended durations. These tools not only simplify production but also improve video quality, reinforcing the benefits of physics-based AI in content creation.

Conclusion

Physics-based AI animation is reshaping the way video content is created. Instead of relying solely on pixel prediction, these systems use world models to simulate physical properties like object positions, velocities, and materials. This approach ensures videos adhere to the laws of motion right from the start. The result? Fewer glitches, smoother transitions, and motion that feels more natural to viewers.

But it’s not just about better visuals. Content creators are saving time by avoiding tedious post-production fixes. Complex interactions, like objects colliding or falling realistically, are rendered correctly in the first pass. Alexis, an AI Engineer at Bonega.ai, highlights this shift:

"Scenes that previously required careful editing to correct physical impossibilities now generate correctly the first time."

Platforms like LongStories.ai are taking these advancements further, empowering YouTube creators and digital storytellers to produce high-quality videos up to 10 minutes long. By combining realistic physics with reusable character models and efficient workflows, these tools are solving long-standing challenges around quality and production speed.

However, there are still hurdles. Physics accuracy tends to degrade after 30 seconds, and managing large numbers of interacting objects remains tricky. Even so, the direction is clear: video production is evolving toward systems that understand physical principles, not just visual patterns. This shift is unlocking possibilities for creating dynamic action scenes, sports content, and intricate simulations that were previously too expensive or time-intensive. As these models improve, the gap between independent creators and large studios will continue to shrink, making high-quality video production accessible to more creators than ever before.

FAQs

How does physics-based AI reduce video glitches?

Physics-based AI reduces glitches by building physical constraints into the generation process. Anchoring textures to 3D geometry limits flickering and "teleporting" objects, joint constraints keep limbs within realistic ranges, and contact solvers prevent foot sliding. The outcome? Smoother, more realistic visuals that make the viewing experience more immersive and enjoyable.

What problems does it solve vs. pixel-based video AI?

Physics-based AI animation addresses challenges often seen in pixel-based video AI, including jerky motion, unnatural object behavior, and identity inconsistencies. By incorporating principles like gravity and momentum, it ensures animations and interactions feel grounded in reality. This approach not only elevates video quality but also deepens viewer engagement and minimizes the need for manual adjustments. It's especially useful for storytelling and maintaining consistency in long-form video projects.

Why does physics accuracy drop after 30 seconds?

Physics accuracy tends to degrade once a video runs past about 30 seconds because models struggle to apply physical laws consistently over longer sequences. Longer videos also rely on video extension techniques, and physical continuity has to hold at each boundary between segments. When it doesn’t, you might notice awkward or unnatural movement paths, warped body shapes, or inconsistent interactions between objects.


LongStories is constantly evolving as it finds its product-market fit. Features, pricing, and offerings are continuously being refined and updated. The information in this blog post reflects our understanding at the time of writing. Please always check LongStories.ai for the latest information about our products, features, and pricing, or contact us directly for the most current details.