AI Voice Cloning in Animation: Legal Risks

AI voice cloning is changing how animation and entertainment projects are made, but it brings legal risks you need to know about. Unlike traditional voice work, AI voice cloning implicates right-of-publicity and biometric privacy laws, which treat a voice as part of personal identity. Here's what you should keep in mind:

- Key Laws: Regulations like Illinois' Biometric Information Privacy Act (BIPA), Tennessee's ELVIS Act, and the new AI Transparency and Voice Rights Act (2026) require consent and clear disclosures for AI-generated voices.

- Recent Legal Case: Voice actors sued Lovo, Inc. in 2024 for allegedly misusing their recordings to create voice clones, and a federal court allowed part of the case to proceed in 2025, raising questions about contracts and personal rights.

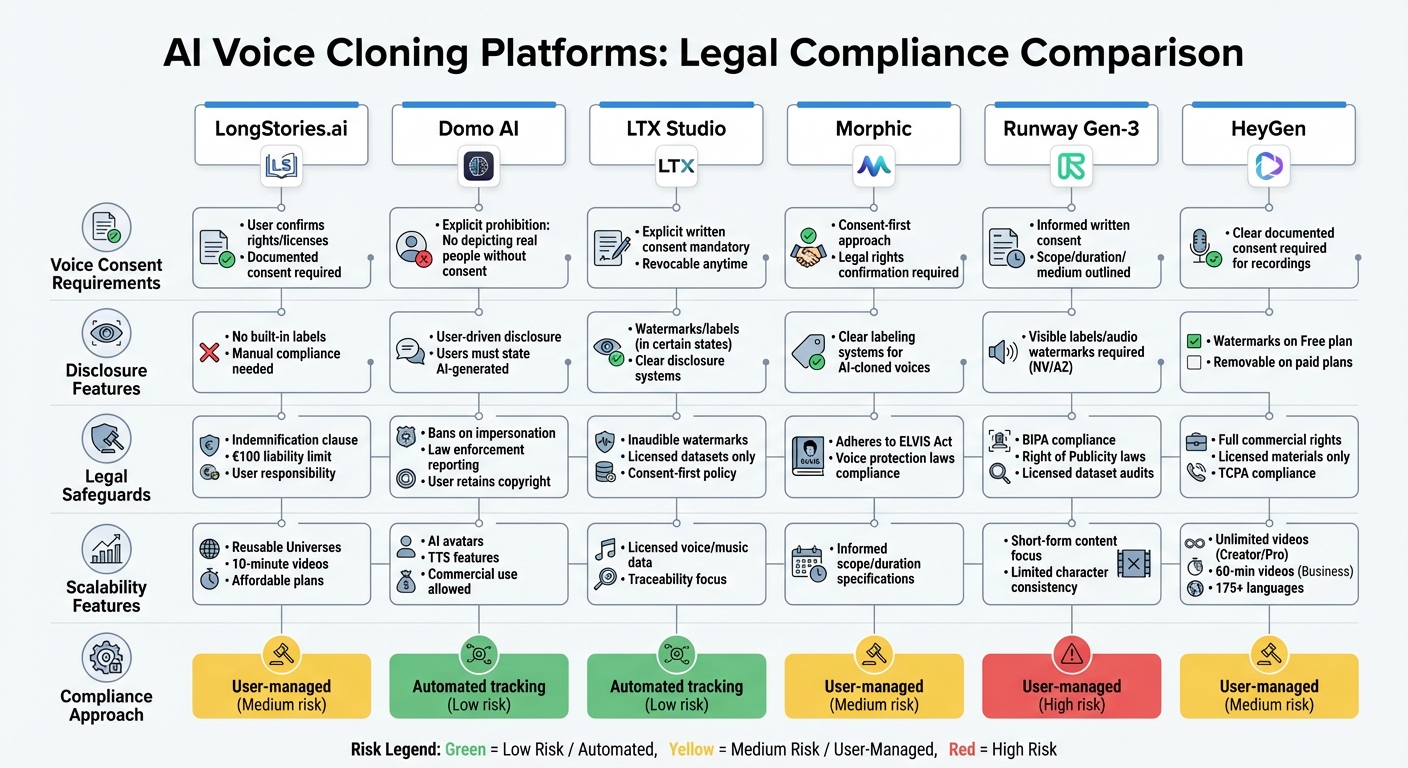

- Platform Policies: Tools like LongStories.ai, Domo AI, LTX Studio, Morphic, Runway Gen-3, and HeyGen have different approaches to consent, disclosure, and liability. Some rely on user responsibility, while others enforce stricter safeguards.

Creators must balance efficiency with compliance. Using clear agreements and transparent labeling is critical to avoid fines and maintain trust. Federal and state laws are tightening, so understanding these rules is now essential for anyone working with AI voice cloning.

1. LongStories.ai

Legal Safeguards

LongStories.ai puts the burden of securing voice cloning rights on its users. Under its Terms of Service, users must confirm they hold all the necessary rights, licenses, consents, and permissions for any audio or text they upload. That includes documented consent from voice actors before their audio samples are used - a step that aligns with legal requirements like Tennessee's ELVIS Act. Users are also expected to review generated content for issues such as intellectual property violations or defamation, and the platform adds content accuracy disclaimers as part of its legal framework.

The Terms of Service also include an indemnification clause: users must defend LongStories.ai against, and cover the cost of, any legal claims tied to their inputs or the AI-generated output. The platform caps its own liability at €100 (around $107) or the amount the user paid over the past 12 months, whichever is higher. Taken together, these terms place most of the legal risk of AI voice cloning on the user rather than on the platform.
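The "whichever is higher" liability cap described above is simple arithmetic. The sketch below is illustrative only: the function name and parameters are hypothetical, and it assumes the cap is just the larger of the €100 floor and the fees paid in the previous 12 months.

```python
def liability_cap_eur(fees_paid_last_12_months_eur: float,
                      floor_eur: float = 100.0) -> float:
    """Return the larger of the fixed floor and the fees paid in the last 12 months."""
    if fees_paid_last_12_months_eur < 0:
        raise ValueError("fees paid cannot be negative")
    return max(floor_eur, fees_paid_last_12_months_eur)


# A user who paid nothing is still covered up to the floor.
print(liability_cap_eur(0.0))    # 100.0
# A user who paid more than the floor is covered up to what they paid.
print(liability_cap_eur(348.0))  # 348.0
```

Either way the cap is small next to the cost of defending a publicity-rights claim, which is why the indemnification clause matters more in practice.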

Disclosure Features

The Terms of Service caution users that AI-generated outputs could contain errors or raise legal concerns. However, LongStories.ai does not currently require built-in disclosure labels for AI-generated voices. With the U.S. AI Transparency and Voice Rights Act set to take effect in early 2026, which will mandate clear disclosures for AI-generated voices in commercial settings, creators will need to manually ensure compliance. This is also necessary to align with the EU AI Act standards.
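Because labels are not built in, creators who publish through their own scripts may want to add the disclosure themselves. The following is a minimal sketch, not a platform feature: the label wording and the `add_ai_disclosure` helper are assumptions, and the required wording should be checked against whichever law applies.

```python
AI_LABEL = "AI-Generated Voice"  # hypothetical label text; actual wording is an assumption


def add_ai_disclosure(description: str, label: str = AI_LABEL) -> str:
    """Prepend a disclosure line unless the description already carries the label."""
    if label.lower() in description.lower():
        return description  # already labeled, so avoid stacking duplicates
    tag = f"[{label}]"
    return f"{tag}\n{description}" if description else tag


labeled = add_ai_disclosure("Episode 1: The Lighthouse")
print(labeled)
```

Running the helper twice on the same text leaves it unchanged, so it can sit safely in a publishing pipeline that processes a video description more than once.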

Scalability for Creators

On top of addressing legal concerns, LongStories.ai boosts efficiency for content creators. The platform allows users to create reusable "Universes" with consistent characters, voices, and styles, reducing common voiceover production delays. It supports long-form video production up to 10 minutes, aligning with YouTube’s monetization criteria. With affordable subscription plans and a free trial, LongStories.ai provides a budget-friendly alternative to traditional voiceover work, which often requires significant financial and time investments.

2. Domo AI

Voice Consent Policies

Domo AI has clear rules against creating content that involves real people without their permission. As outlined in the platform's Terms of Service, "You may not create or attempt to create content that... Depicts real people without their consent [or] Impersonates real people or falsely portrays individuals in misleading or defamatory ways". This policy also applies to AI avatars and Text-to-Speech (TTS) features. According to the Generative AI Usage Policy, "Impersonation – Misrepresenting any individual or entity using AI avatars or the TTS feature is strictly forbidden". Users are required to confirm ownership or obtain proper permissions before uploading any related content.

Disclosure Features

Domo AI takes a user-driven approach to transparency. Unlike platforms with automated disclosure systems, Domo AI asks users to openly state when content is AI-generated before sharing it. The policy specifically mentions, "If you incorporate AI images into your DomoAI designs, we ask that you disclose that they were created using AI". This approach relies on user cooperation and complements the platform’s broader legal measures.

Legal Safeguards

To prevent misuse, Domo AI enforces strict restrictions on its AI tools. The platform bans the use of its tools for spreading false information, creating misleading portrayals of public figures, or producing harmful content, including material that is sexually explicit or harmful to minors. AI avatars and TTS features are also prohibited from discussing sensitive topics such as religion, politics, race, gender, or sexuality. If unlawful content is detected, Domo AI may report it to law enforcement.

Additionally, users retain the copyright for any content they generate and can use it commercially, as long as it adheres to all applicable laws and respects the rights of third parties. These measures aim to support responsible use of AI voice cloning while addressing emerging legal challenges.

3. LTX Studio

Voice Consent Policies

At LTX Studio, explicit written consent is a must for voice cloning and training AI models. Creators need to secure clear authorization from performers, ensuring the consent outlines the intended use of the voice. Importantly, this consent can be revoked at any time.
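Consent that names intended uses and can be revoked at any time maps naturally onto a small record with a date check. The sketch below is one possible way a creator might track this on their own side. The `VoiceConsent` class and its fields are hypothetical and do not reflect how LTX Studio stores consent.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Optional


@dataclass
class VoiceConsent:
    performer: str
    intended_uses: FrozenSet[str]      # uses named in the written consent
    signed_on: date
    revoked_on: Optional[date] = None  # None while the consent is still in force

    def revoke(self, when: date) -> None:
        """Record the date from which the performer withdraws consent."""
        self.revoked_on = when

    def permits(self, use: str, on: date) -> bool:
        """True only if the use was named and the consent was active on that date."""
        if on < self.signed_on:
            return False
        if self.revoked_on is not None and on >= self.revoked_on:
            return False
        return use in self.intended_uses


consent = VoiceConsent("Performer A", frozenset({"animation"}), date(2025, 1, 10))
print(consent.permits("animation", date(2025, 2, 1)))    # True
print(consent.permits("advertising", date(2025, 2, 1)))  # False: use not named
consent.revoke(date(2025, 3, 1))
print(consent.permits("animation", date(2025, 3, 1)))    # False: consent revoked
```

Checking against a date rather than a simple flag keeps a record of which renders were made while consent was still in force.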

Disclosure Features

In certain states, laws now require labels or watermarks on AI-generated content. For example, the AI Transparency and Voice Rights Act mandates that any commercial use of AI-generated voices - like in animation or advertising - includes clear disclosure. To meet these requirements, platforms are adopting systems that notify audiences when they’re hearing an AI-generated voice instead of a traditional recording. These efforts align LTX Studio with current regulations and enhance its commitment to transparency.

Legal Safeguards

LTX Studio follows a strict "consent-first" policy, requiring explicit confirmation of rights before any voice data is uploaded or recorded. All voice and music data are obtained through approved licensing channels. To bolster security, the studio uses inaudible watermarks, ensuring traceability and protecting against copyright infringement. These practices help creators meet legal requirements across different regions while safeguarding their work.

4. Morphic

Voice Consent Policies

Morphic follows a strict consent-first approach, requiring creators to confirm they have legal rights to any voice they upload. This confirmation step is meant to keep unauthorized voice cloning off the platform. Additionally, the platform expects voice actors to be fully informed about how their voices will be used, including the scope and duration of AI applications. These measures reflect the platform's commitment to clear legal and ethical practices.

Disclosure Features

In line with the U.S. AI Transparency and Voice Rights Act, Morphic is obligated to disclose when AI-generated voices are used in commercial animations or advertisements. To meet this requirement, the platform uses clear labeling systems that inform audiences whenever an AI-cloned voice is present.

Legal Safeguards

Morphic strengthens its consent and disclosure policies with solid legal protections. The platform adheres to various voice protection laws, including Tennessee's ELVIS Act. This law criminalizes unauthorized digital replication of someone's voice and provides legal avenues for those affected by such violations. These safeguards reinforce Morphic's dedication to ethical AI practices.

5. Runway Gen-3

Voice Consent Policies

Runway Gen-3 requires clear, explicit voice consent to navigate a complicated legal landscape. The platform operates under state-level Right of Publicity laws, such as California's Civil Code §3344 and New York's Sections 50 and 51 of the Civil Rights Law, which prohibit the unauthorized commercial use of someone's voice. In Illinois, voiceprints are classified as biometric identifiers under the Biometric Information Privacy Act (BIPA), meaning unauthorized replication could result in hefty civil penalties. Tennessee's ELVIS Act further emphasizes the need for consent in these scenarios.

"The ELVIS Act is already influencing contractual norms, particularly for performers, voice artists, and influencers whose digital likeness or vocal style may be used in derivative works or automated production pipelines" - Jay Kotzker, Holon Law Partners

Creators working with Runway Gen-3 must ensure they have informed, written consent that clearly outlines the scope, duration, and medium of use for any voice cloning projects.

Disclosure Features

The AI Transparency and Voice Rights Act, enacted in early 2026, compels creators to disclose the use of AI-generated voices in commercial settings like animation or advertising. Nevada and Arizona have implemented even stricter transparency laws, requiring visible labels or audio watermarks for AI-generated voices in commercial entertainment. Additionally, the Federal Trade Commission considers the use of cloned voices in advertising without proper disclosure to be deceptive to consumers. These rules add another layer of legal complexity for creators to manage.

Legal Safeguards

Runway Gen-3, like other platforms, faces enforcement challenges from major distributors. Under Section 43(a) of the Lanham Act, using a recognizable voice clone in animated commercials could lead to claims of false endorsement. Platforms such as YouTube, Meta, and TikTok now mandate disclosure of AI-generated content and offer tools for removing unauthorized voice likenesses. To reduce the risk of derivative work infringement claims, creators are advised to audit training data and ensure it comes from licensed datasets, especially for commercial animation projects.

6. HeyGen

HeyGen combines creative tools with a focus on legal compliance, much like other platforms in this space.

Voice Consent Policies

HeyGen allows users to create "Custom Avatars" by uploading their voice recordings, which are then used to generate digital avatars. To stay compliant with legal standards, the platform requires clear and documented consent from performers before their voices are used for AI cloning. This approach aligns with state laws that classify voiceprints as biometric identifiers, such as in California and Illinois, where explicit consent is mandatory. The varying laws across states add complexity to ensuring compliance for nationwide use.

Disclosure Features

HeyGen embeds watermarks on videos created with its Free plan. Because these watermarks can be removed on paid plans, creators should not treat them as a substitute for the disclosures the AI Transparency and Voice Rights Act calls for. Users are also responsible for managing consent across different jurisdictions and keeping track of voice dataset attributions to ensure compliance.

Legal Safeguards

HeyGen provides users with full commercial rights to the content they create, while requiring that only licensed materials are used to train its AI systems. This helps mitigate potential copyright issues. Additionally, under the FCC's interpretation of the Telephone Consumer Protection Act (TCPA), calls that use AI-generated voices require prior express consent (written consent for telemarketing calls). Violations carry statutory damages of $500 per call, rising to as much as $1,500 per call for willful or knowing violations. These safeguards align HeyGen with industry standards and help protect both the platform and its users.

Scalability for Creators

HeyGen isn't just about compliance - it also supports creators in scaling their content efforts. The platform offers unlimited video generation on its Creator and Pro plans, while the Business plan allows for videos up to 60 minutes long and includes team collaboration features. With support for voice cloning and video translation in over 175 languages and dialects and access to 300+ voices, HeyGen is designed to meet diverse content needs. However, creators must navigate compliance requirements, such as mandatory watermarking and labeling for synthetic media in states like Nevada and Arizona, while leveraging these tools to expand their reach.

Platform Comparison: Advantages and Disadvantages

AI Voice Cloning Platform Comparison: Legal Features and Compliance

Building on the earlier analysis of legal frameworks, each platform offers a unique mix of compliance and production efficiency. LongStories.ai stands out for its focus on scalability, using reusable "Universes" to maintain consistent voices across videos up to 10 minutes long. However, creators must individually secure voice consent while navigating state-specific laws, such as those requiring explicit written consent for voiceprint replication.

Domo AI and LTX Studio take a more technical approach to compliance. Domo AI uses inaudible fingerprints to track authorship and prevent unauthorized use, aligning with transparency laws in states like Nevada and Arizona. LTX Studio employs Explainable Inference to trace vocal segments back to their licensed datasets. Both platforms require users to confirm they have the legal rights to upload voice data. These features highlight their focus on balancing production needs with legal accountability.

Morphic adopts a user-driven compliance model, requiring creators to ensure all necessary consents are in place. This approach shifts the legal responsibility to users, offering the platform some protection. However, navigating state-specific laws, such as Tennessee's ELVIS Act and California Civil Code §3344, becomes the creator's responsibility.

Runway Gen-3 and HeyGen offer fewer legal protections, particularly for projects needing consistent voice continuity. Runway Gen-3 focuses on short-form content and lacks the tools for maintaining character consistency, making it less ideal for long-term animation projects. HeyGen, with its avatar-based approach and commercial rights offerings, may not meet the needs of creators seeking persistent, consistent character voices. These limitations push creators to weigh ease of use against regulatory risks.

This comparison underscores the trade-off between automated compliance tools and creative flexibility. Platforms like Domo AI and LTX Studio, with features like watermarking and attribution tracking, streamline compliance but may restrict creative freedom. On the other hand, platforms like LongStories.ai and HeyGen prioritize production flexibility, leaving creators to manage complex legal requirements on their own. Laws like Tennessee's ELVIS Act set a national precedent, forcing creators to navigate an increasingly intricate legal landscape. Choosing the right platform ultimately depends on whether the priority lies in streamlined production or enhanced legal safeguards.

Conclusion

Between 2024 and 2026, AI voice cloning transitioned from minimal oversight to being tightly regulated under laws like Illinois' BIPA and Tennessee's ELVIS Act. The proposed federal NO FAKES Act would hold platforms accountable for hosting unauthorized voice clones, with penalties starting at $5,000 per violation and escalating significantly if actual damages are proven.
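The per-violation figure above can be turned into a rough exposure estimate. This is a back-of-the-envelope sketch, not legal advice: the function is hypothetical, and it assumes a claimant recovers the greater of the per-violation total and any proven actual damages, which is one plausible reading of "escalating if actual damages are proven".

```python
def estimated_exposure_usd(violations: int,
                           actual_damages_usd: float = 0.0,
                           per_violation_usd: float = 5_000.0) -> float:
    """Greater of (violations x per-violation amount) and proven actual damages."""
    if violations < 0 or actual_damages_usd < 0:
        raise ValueError("inputs cannot be negative")
    statutory_total = violations * per_violation_usd
    return max(statutory_total, actual_damages_usd)


# Three unauthorized clips with no proven damages.
print(estimated_exposure_usd(3))            # 15000.0
# The same three clips when $40,000 in actual damages is proven.
print(estimated_exposure_usd(3, 40_000.0))  # 40000.0
```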

Choosing the right platform requires balancing flexibility with legal protection. Options like LongStories.ai focus on scalability, while others, such as Domo AI and LTX Studio, prioritize traceability. Meanwhile, platforms like Morphic, Runway Gen-3, and HeyGen often leave compliance responsibilities to users. These differences highlight the importance of having solid legal agreements in place.

Attorney Jay Kotzker of Holon Law Partners explains the current landscape:

"The industry is entering an era where right-of-publicity, AI ethics, and content licensing converge. Proactive contracting is now essential to avoiding disputes and safeguarding creative relationships"

Creators should use Voice Model Release Forms to explicitly grant permissions for AI training and synthesis. Many older recording contracts, drafted before 2024, lack provisions for these rights. Legacy agreements need updates to address whether performances can be modified using AI, localized, or used to train internal models.
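A studio with a large back catalog could run a quick audit to find which agreements lack these provisions. The sketch below assumes each contract has already been reviewed and tagged with the clauses it contains. The clause names and the `RecordingContract` structure are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, FrozenSet, List

# Hypothetical tags for the three AI-related rights mentioned above.
AI_RIGHTS = ("ai_modification", "localization", "model_training")


@dataclass
class RecordingContract:
    performer: str
    signed_on: date
    clauses: FrozenSet[str]  # clause tags assigned during manual review


def missing_ai_clauses(contract: RecordingContract) -> List[str]:
    """List the AI-related rights the contract does not address."""
    return [right for right in AI_RIGHTS if right not in contract.clauses]


def contracts_to_update(contracts: List[RecordingContract]) -> Dict[str, List[str]]:
    """Map each performer to the missing clauses, skipping complete contracts."""
    report = {}
    for contract in contracts:
        gaps = missing_ai_clauses(contract)
        if gaps:
            report[contract.performer] = gaps
    return report


legacy = RecordingContract("Performer A", date(2019, 5, 1), frozenset({"broadcast"}))
current = RecordingContract("Performer B", date(2025, 2, 1), frozenset(AI_RIGHTS))
print(contracts_to_update([legacy, current]))
```

The audit only flags gaps. Whether a legacy agreement can be read to cover AI use is a question for counsel.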

Transparent labeling, such as marking outputs as "AI-Generated", helps reduce the risk of fraud and data laundering. Deepak Gupta, CEO and cofounder of Kveeky, underscores this necessity:

"Ignorance is no longer a defense; it's a liability"

Ethical practices also safeguard relationships with talent and maintain consumer trust. These steps are critical for adapting to upcoming federal regulations, like the proposed Impersonation Rule. Platforms with strong attribution tracking and watermarking tools can help tie every voice clip to a licensed dataset, but tooling is only part of the answer. As the FTC's Office of Technology cautions:

"the risks involved with voice cloning and other AI technology cannot be addressed by technology alone"

For animators and creators, these measures are vital to protecting creative assets and adhering to ethical standards in AI-driven character animation.

FAQs

Do I always need written consent to clone a voice?

In most situations, written consent is necessary to legally clone someone's voice, especially for commercial purposes. This step helps protect against legal troubles like unauthorized use or violating a person's publicity rights. It also promotes ethical practices when working with AI voice cloning technology.

What disclosures are required for AI-generated voices in the U.S.?

In the United States, regulations around AI-generated voices focus on transparency. This is especially critical when AI voice cloning is used without someone's consent, in deceptive ways, or to mislead others. Legal guidelines stress the need to obtain clear consent and give proper credit to ensure ethical practices.

What should a voice model release form include?

A voice model release form needs to clearly outline consent from the individual for the use of their voice. It should detail the scope and purpose of its use, ensuring there’s no ambiguity about how the voice will be utilized. Additionally, the form should specify any rights and restrictions related to the recording, covering areas like distribution or modification.

To protect all involved parties, the release should also include terms for revocation - explaining under what conditions consent can be withdrawn - and liability protections. This ensures everyone understands their responsibilities and rights, minimizing potential disputes.
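The elements listed in this answer can double as a checklist when reviewing a draft release. The sketch below is illustrative: the section names are hypothetical labels for the items above, and passing the check says nothing about whether the wording is legally sufficient.

```python
from typing import List

# Hypothetical section keys, one per element described in the answer above.
REQUIRED_SECTIONS = (
    "consent",
    "scope",
    "purpose",
    "rights_and_restrictions",
    "revocation_terms",
    "liability",
)


def missing_sections(form: dict) -> List[str]:
    """Return required sections that are absent or contain only whitespace."""
    missing = []
    for name in REQUIRED_SECTIONS:
        value = form.get(name)
        if not isinstance(value, str) or not value.strip():
            missing.append(name)
    return missing


draft = {
    "consent": "I authorize the use of my recorded voice.",
    "scope": "One animated series, two seasons.",
    "purpose": "   ",  # left blank in the draft
}
print(missing_sections(draft))
```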

LongStories is constantly evolving as it finds its product-market fit. Features, pricing, and offerings are continuously being refined and updated. The information in this blog post reflects our understanding at the time of writing. Please always check LongStories.ai for the latest information about our products, features, and pricing, or contact us directly for the most current details.