Deepfake Detection: How YouTube Protects Creators

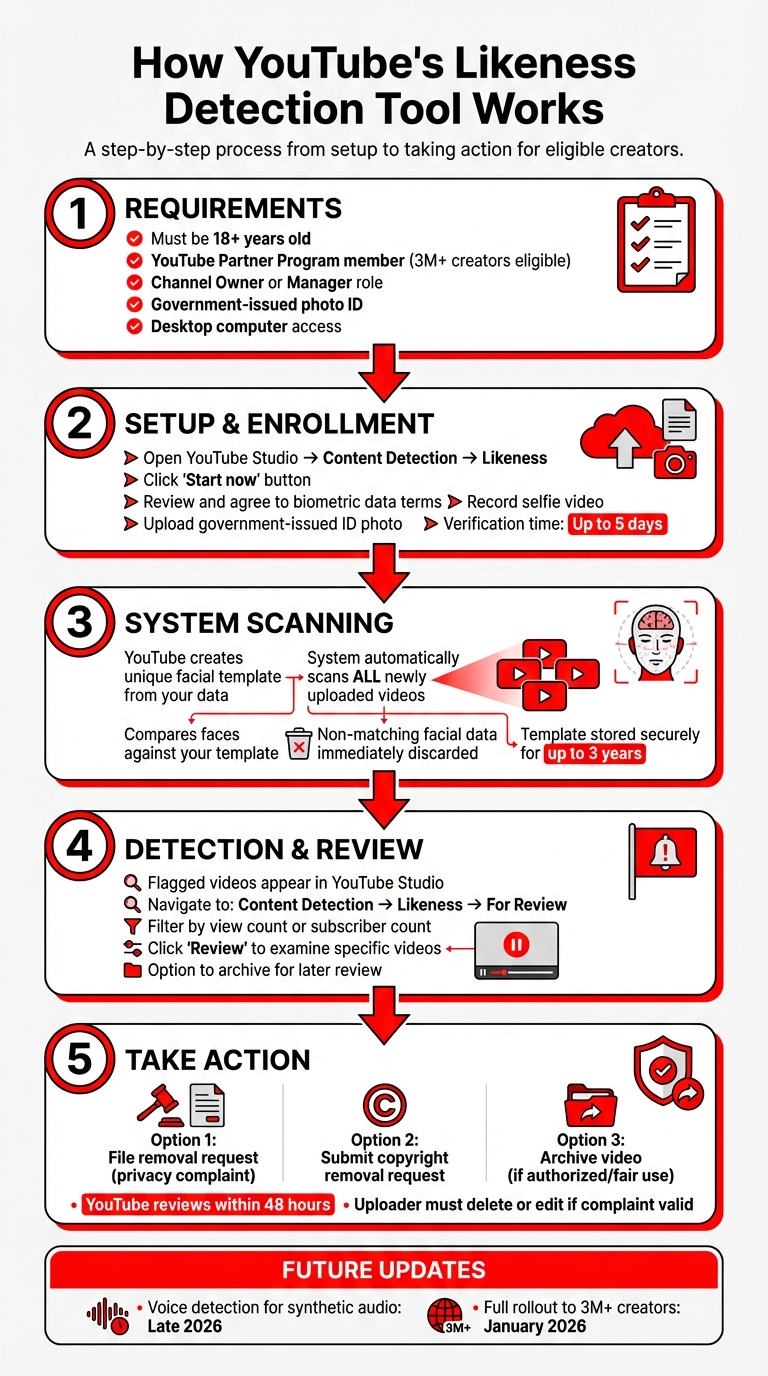

Deepfakes are a growing problem for YouTube creators: AI-generated videos can misuse a creator's face or voice to damage their reputation, promote scams, or spread false information. In response, YouTube has introduced tools such as the Likeness Detection Tool. This system scans uploaded videos for unauthorized appearances of enrolled creators' faces so creators can act quickly on flagged content. By January 2026, over 3 million creators in the YouTube Partner Program are expected to have access to the feature.

Key highlights:

- Likeness Detection Tool: Scans new uploads for matches against a facial template built from a creator's biometric data (a government ID and a selfie video).

- How It Works: Flagged videos appear in YouTube Studio for review and potential removal requests.

- Future Updates: Voice detection for synthetic audio is expected by late 2026.

- Privacy Measures: YouTube says biometric data is stored securely and has not been used for AI training.

These efforts, combined with mandatory AI content disclosures and advanced moderation, aim to protect creators' identities and maintain trust on the platform.

YouTube's Likeness Detection Tool: What It Does

YouTube's Likeness Detection Tool is designed to scan newly uploaded videos for unauthorized appearances of your face. It works by creating a digital facial template based on a video selfie and a government-issued ID that you provide during setup. Once you enroll, the tool automatically compares your unique facial template to faces in videos uploaded across the platform.

Every new video is scanned, and if the system finds a potential match, it flags the content in the "Likeness" tab within your YouTube Studio's Content detection menu. This allows you to review flagged videos and decide whether to request their removal. Think of it as a version of Content ID, but specifically for facial recognition.

Currently, the tool focuses on detecting visual matches of faces. Voice detection for synthetic or altered audio is expected to roll out by late 2026. YouTube also plans to make this tool available to over 3 million creators in the YouTube Partner Program by January 2026.

How Likeness Detection Protects Creators

This tool serves as a safeguard for your digital identity and reputation. When deepfakes misuse your face - whether to advertise fake products, spread false information, or harm your credibility - the system flags such videos, allowing you to act quickly.

The feature is entirely optional and operates only with your consent. Jack Malon, a YouTube spokesperson, clarified, "Likeness detection is a completely optional feature, but does require a visual reference to work". You have full control over whether to enable it, and if you decide to opt out, YouTube will stop processing your data within 24 hours. This level of control aligns with YouTube's broader strategy to combat deepfake misuse.

The Technology Behind Likeness Detection

The tool uses advanced machine learning models to identify facial features based on your biometric reference data. During enrollment, you submit a government-issued ID and record a short video selfie. YouTube then creates a face template - a digital representation of your facial features. This template is key to identifying unauthorized uses of your likeness. The verification process may take up to five days.

Once your template is active, YouTube's system scans all faces in newly uploaded videos. To ensure privacy, the system immediately discards facial data that does not match an enrolled creator. Your face template, along with your legal name and ID information, is stored securely for up to three years from your last sign-in date, unless you choose to withdraw your consent earlier.
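YouTube hasn't published how its matching works. As a rough illustration only, face-recognition systems commonly turn each face into a numeric embedding vector and compare it with stored templates using a similarity score. The sketch below assumes that approach; the vectors, the `match_faces` helper, and the 0.8 threshold are all hypothetical. A face that matches no enrolled template is simply not kept, mirroring the discard behavior described above.

```python
import math

# Hypothetical similarity threshold; YouTube has not published its matching criteria.
MATCH_THRESHOLD = 0.8

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def match_faces(detected_faces, enrolled_templates, threshold=MATCH_THRESHOLD):
    """Return (creator_id, score) for each detected face that matches an enrolled template.

    A face whose best score stays below the threshold is not added to the
    result, so nothing about it is retained.
    """
    matches = []
    for face in detected_faces:
        best_id, best_score = None, threshold
        for creator_id, template in enrolled_templates.items():
            score = cosine_similarity(face, template)
            if score >= best_score:
                best_id, best_score = creator_id, score
        if best_id is not None:
            matches.append((best_id, best_score))
    return matches

if __name__ == "__main__":
    templates = {"creator_a": [1.0, 0.0, 0.0], "creator_b": [0.0, 1.0, 0.0]}
    faces = [[0.9, 0.1, 0.0], [0.0, 0.0, 1.0]]
    print(match_faces(faces, templates))  # only the first face matches creator_a
```

Real systems use embeddings with hundreds of dimensions produced by a trained model; the three-dimensional toy vectors here only show the comparison step.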

The tool was first tested in December 2024 with talent represented by Creative Artists Agency (CAA) before being expanded to millions of creators. Matches are not always correct, so flagged videos still need manual review.

How to Set Up Likeness Detection

How YouTube's Likeness Detection Tool Works: Setup to Action

Requirements and Setup Steps

To enable Likeness Detection, you need to meet a few basic criteria. First, you must be at least 18 years old and part of the YouTube Partner Program, which includes over 3 million creators. Only Channel Owners or designated Managers can complete the setup process. Editors will need to request assistance from someone with higher permissions.

You’ll also need a valid government-issued photo ID and access to a desktop computer to record a short selfie video. The feature is still an experimental beta and is available only in select countries.

To get started, open YouTube Studio on your desktop, go to the Content Detection tab, choose the Likeness option, and click Start now.

How to Submit Your Biometric Data

Once you’ve met the requirements, you can move on to submitting your biometric data. After selecting Start now, review and agree to YouTube’s terms for using biometric data. Then, upload a clear photo of your government-issued ID and record a selfie video. This video is used to create a unique facial template that YouTube will compare against newly uploaded videos.

The verification process typically takes up to five days. During this time, YouTube will review your ID and biometric data to confirm your identity. Once approved, you’ll receive a confirmation email, and the system will begin scanning new uploads for potential matches. Your facial template, legal name, and ID will be securely stored for up to three years from your last sign-in unless you decide to opt out. If your channel features multiple individuals on camera, each person must complete their own enrollment separately.
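Because the retention window runs from your last sign-in, each sign-in effectively restarts the three-year clock. A minimal sketch of that date arithmetic, assuming a simple calendar-year offset (the `retention_deadline` helper and the February 29 fallback are illustrative assumptions, not YouTube's documented rules):

```python
from datetime import date

# "Up to three years from your last sign-in date", per the article.
RETENTION_YEARS = 3

def retention_deadline(last_sign_in: date, years: int = RETENTION_YEARS) -> date:
    """Latest date the data would be kept if consent is not withdrawn earlier."""
    try:
        return last_sign_in.replace(year=last_sign_in.year + years)
    except ValueError:
        # Feb 29 doesn't exist in the target year; rolling back to Feb 28 is an assumption.
        return last_sign_in.replace(year=last_sign_in.year + years, day=28)

if __name__ == "__main__":
    print(retention_deadline(date(2025, 6, 1)))  # 2028-06-01
```

Withdrawing consent ends retention earlier than this date; the function only models the outer limit.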

What to Do After Detection: Reviewing and Taking Action

YouTube's detection tool not only flags potential deepfake content but also gives you the tools to respond quickly and safeguard your digital identity.

How to View Detected Content

Flagged videos appear in your review queue. To see them, open YouTube Studio and go to Content Detection > Likeness > For Review. There you'll find a list of videos in which your face may have been altered or generated by AI.

You can use filters to organize the videos by metrics like view count or the number of channel subscribers. To examine a specific video, click Review next to it. If you're not ready to act, you can archive the video for later.
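One way to triage a long queue is to review the widest-reaching videos first. The sketch below models that with hypothetical `FlaggedVideo` records; the field names are assumptions based on the filters described above, not an actual YouTube API.

```python
from dataclasses import dataclass

@dataclass
class FlaggedVideo:
    """Hypothetical record for one item in the For Review queue."""
    video_id: str
    views: int
    channel_subscribers: int
    archived: bool = False

# Metrics the queue can be ordered by (names are illustrative).
SORT_KEYS = {
    "views": lambda v: v.views,
    "subscribers": lambda v: v.channel_subscribers,
}

def review_order(queue: list[FlaggedVideo], by: str = "views") -> list[FlaggedVideo]:
    """Unarchived videos, highest value of the chosen metric first."""
    return sorted((v for v in queue if not v.archived), key=SORT_KEYS[by], reverse=True)

if __name__ == "__main__":
    queue = [
        FlaggedVideo("abc", views=500, channel_subscribers=10),
        FlaggedVideo("def", views=20_000, channel_subscribers=3),
        FlaggedVideo("ghi", views=90_000, channel_subscribers=50, archived=True),
    ]
    print([v.video_id for v in review_order(queue)])  # ['def', 'abc']
```

Archived items drop out of the ordering, which matches the idea of setting a video aside until you're ready to act on it.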

While the tool primarily identifies visual facial matches, it’s not foolproof. If you suspect a deepfake that wasn’t flagged, you can manually report it using YouTube's standard privacy complaint process.

Once you've reviewed the flagged content, you can decide how to address any misuse.

How to Report Deepfake Misuse

After reviewing, you can take action on flagged videos by:

- Filing a removal request if the video uses your face without permission.

- Submitting a copyright removal request if the video includes your original footage.

- Archiving the video if it’s authorized or falls under fair use.
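The three options above can be summarized as a personal checklist. This is a hypothetical sketch of one creator's decision logic, not how YouTube processes requests; the final call on fair use, parody, or news value rests with YouTube's reviewers, and archiving a false match is an assumption.

```python
from enum import Enum

class Action(Enum):
    PRIVACY_REMOVAL = "request removal (privacy complaint)"
    COPYRIGHT_REMOVAL = "submit copyright removal request"
    ARCHIVE = "archive"

def choose_actions(face_used_without_permission: bool,
                   contains_my_original_footage: bool,
                   authorized_or_fair_use: bool) -> list[Action]:
    """Hypothetical checklist mirroring the three options listed above."""
    if authorized_or_fair_use:
        return [Action.ARCHIVE]
    actions = []
    if face_used_without_permission:
        actions.append(Action.PRIVACY_REMOVAL)
    if contains_my_original_footage:
        actions.append(Action.COPYRIGHT_REMOVAL)
    # Nothing to act on (for example, a false match): archive it.
    return actions or [Action.ARCHIVE]
```

A video that both fakes your face and reuses your footage returns both removal routes, since the privacy and copyright processes are separate.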

"Likeness detection helps creators find content on YouTube where their face appears to be altered or generated by AI. If any relevant content is found, creators can review and decide what to do, including requesting removal through the privacy complaint process." - YouTube Support

When you file a removal request, YouTube reviewers will consider factors like fair use, parody, or news reporting before making a decision. If the privacy complaint is valid, YouTube gives the uploader 48 hours to either delete or edit the video. You’ll also receive a confirmation email with a retraction link in case you change your mind.
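To keep track of the uploader's window, add 48 hours to the time it starts. The sketch assumes the clock starts when the uploader is notified and uses timezone-aware UTC timestamps; `uploader_deadline` and `hours_remaining` are hypothetical helpers.

```python
from datetime import datetime, timedelta, timezone

# 48-hour window stated in the article; the start time is an assumption.
UPLOADER_WINDOW = timedelta(hours=48)

def uploader_deadline(notified_at: datetime) -> datetime:
    """Time by which the uploader must delete or edit the video."""
    return notified_at + UPLOADER_WINDOW

def hours_remaining(notified_at: datetime, now: datetime) -> float:
    """Hours left in the window, floored at zero once it has passed."""
    remaining = uploader_deadline(notified_at) - now
    return max(remaining.total_seconds() / 3600, 0.0)

if __name__ == "__main__":
    notified = datetime(2026, 3, 1, 9, 30, tzinfo=timezone.utc)
    print(uploader_deadline(notified))  # 2026-03-03 09:30:00+00:00
```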

Keep in mind that YouTube doesn’t automatically remove all reported content. Reviewers evaluate whether the video involves public figures, newsworthy content, or other protected forms of expression. As Ryan Whitwam, Senior Technology Reporter at Ars Technica, observed:

"Popular creators may have to begin filing AI likeness complaints as regularly as they do DMCA takedowns".

YouTube's Complete Anti-Deepfake Strategy

YouTube has implemented a layered defense system to tackle deepfake abuse, going far beyond its Likeness Detection tool. This approach combines mandatory disclosure rules, advanced detection technologies, and collaboration across the industry to safeguard creators and maintain trust on the platform.

One key measure is the mandatory disclosure of realistic synthetic content. Creators must clearly indicate if their videos contain AI-generated elements, such as synthetic faces, voices, or altered events. During upload, YouTube Studio prompts creators to select "Yes" under the "Altered content" setting for such media. For sensitive topics such as elections, public health, or government officials, YouTube adds a more prominent label to the video itself; other synthetic content is labeled in the description. This complements the Likeness Detection tool by addressing other forms of AI-driven manipulation.
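The labeling rule described above can be expressed as a small function. This is a simplified sketch: the topic set contains only the examples named in this article, and YouTube's actual criteria for what counts as sensitive are broader and not public.

```python
from typing import Optional

# Only the example topics named in this article; YouTube's real list is broader.
SENSITIVE_TOPICS = {"elections", "public health", "government officials"}

def label_placement(altered_content: bool, topics: set[str]) -> Optional[str]:
    """Where a disclosure label would appear, or None if none is required."""
    if not altered_content:
        return None
    if topics & SENSITIVE_TOPICS:
        return "prominent label on the video"
    return "label in the description"
```

The disclosure checkbox gates everything: if a creator doesn't mark content as altered, no label is added, which is why YouTube pairs this rule with penalties for non-disclosure.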

YouTube also targets deepfakes in other media formats. For example, the platform has developed Synthetic-Singing Identification, a tool within Content ID that identifies AI-generated vocals mimicking artists' voices. This allows music labels and distributors to flag and manage these AI clones through the Content Detection tab. Additionally, YouTube employs AI classifiers and over 20,000 human reviewers to identify emerging abuse patterns and enhance moderation efficiency. As Jennifer Flannery O'Connor and Emily Moxley, Vice Presidents of Product Management at YouTube, noted:

"AI is continuously increasing both the speed and accuracy of our content moderation systems."

To prevent creators' content from being misused for AI training, YouTube employs technical measures to detect and block unauthorized third-party access to videos. It also adheres to C2PA standards (Coalition for Content Provenance and Authenticity) and uses tools like Google DeepMind's SynthID to watermark AI-generated images and audio. This creates a tamper-evident digital trail, ensuring content authenticity. YouTube is a steering member of C2PA, collaborating with Adobe, Microsoft, and OpenAI.
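Tamper evidence generally rests on cryptographic hashing: a digest of the content is recorded when it is created, and any later edit changes that digest. The sketch below shows only that core idea using Python's `hashlib`. Real C2PA manifests are signed and embedded in the file, and SynthID is an invisible watermark rather than a hash, so neither is implemented here; `record_provenance` and `is_unaltered` are hypothetical helpers.

```python
import hashlib

def record_provenance(content: bytes) -> dict:
    """Hypothetical minimal provenance record: a SHA-256 digest of the content."""
    return {"alg": "sha256", "digest": hashlib.sha256(content).hexdigest()}

def is_unaltered(content: bytes, record: dict) -> bool:
    """True only if the content still hashes to the recorded digest."""
    return hashlib.sha256(content).hexdigest() == record["digest"]

if __name__ == "__main__":
    record = record_provenance(b"original video bytes")
    print(is_unaltered(b"original video bytes", record))  # True
    print(is_unaltered(b"edited video bytes", record))    # False
```

On its own, an unsigned digest can be replaced along with the content; signing the record, as C2PA does, is what ties it to a trusted source.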

Creators who fail to disclose synthetic content face serious consequences, including content removal, suspension from the YouTube Partner Program, or other penalties. With over 500 hours of video uploaded every minute, these automated systems are critical for managing deepfake challenges at scale.

Privacy and Data Security Concerns

YouTube's tools for detecting deepfakes are designed to protect creators, but the use of biometric data comes with its own set of privacy concerns. Handling sensitive information like government IDs and selfie videos requires robust security measures.

YouTube has clarified that biometric data collected - such as your ID and selfie video - is used exclusively for identity verification and supporting its likeness detection tool. As YouTube spokesperson Jack Malon explained:

"The data provided for the likeness detection tool is only used for identity verification purposes and to power this specific safety feature".

However, privacy advocates have raised concerns about the broader implications. They point to Google's privacy policy, which could potentially allow biometric data to be used for training AI models like Gemini or Search. Dan Neely, CEO of Vermillio, cautioned:

"As Google races to compete in AI and training data becomes strategic gold, creators need to think carefully about whether they want their face controlled by a platform rather than owned by themselves".

In response, YouTube has stressed that biometric data collected through this tool has never been used to train AI models, and it is revising the sign-up language to explain its data handling more clearly. Participation remains optional, and by January 2026 over 3 million creators are expected to have access to the tool.

Legal experts also highlight the risks tied to biometric data. Since biometric identifiers, like facial features, are permanent, a breach could lead to misuse, including the creation of synthetic content. Luke Arrigoni, CEO of Loti, warned:

"Linking names to facial biometrics risks misuse".

This puts the responsibility on YouTube to handle such sensitive information with care. The platform's measures aim to protect creators from deepfakes, but they also show why strong data security matters as AI advances.

Conclusion

YouTube is stepping up to help creators protect their identity and content from unauthorized AI use. Its likeness detection tool scans newly uploaded videos for potential misuse, while features like disclosure labels for synthetic content and Synthetic-Singing Identification for music partners aim to increase transparency and build trust across the platform.

Getting started is straightforward: verify your identity through YouTube Studio and keep an eye on the Content Detection tab. As Amjad Hanif, Vice President of Creator Products at YouTube, put it:

"The technology is designed to scale enforcement so creators don't have to play whack‑a‑mole on their own."

By January 2026, over 3 million creators are expected to benefit from these tools, signaling a shift toward large-scale, automated protection. This initiative underscores YouTube's dedication to safeguarding your identity and content.

In a time when your digital presence is more valuable than ever, these tools aren't just about taking down fake videos - they're about preserving the trust you've built with your audience. If you're part of the YouTube Partner Program, take the step today to verify your identity and secure your digital presence.

FAQs

Will this detect deepfakes using my voice but not my face?

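Not yet. The Likeness Detection Tool currently matches faces only; detection of synthetic or altered voices is expected to roll out by late 2026. Until then, you can report a voice deepfake manually through YouTube's privacy complaint process. Music partners can also use Synthetic-Singing Identification in Content ID to flag AI-generated vocals that mimic an artist's voice.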

What happens if the tool flags a video as parody or fair use?

The tool only flags possible matches; it doesn't decide whether a video is parody or fair use. If you file a removal request, YouTube reviewers weigh factors such as fair use, parody, and news reporting before making a decision, so not every request leads to removal. If you consider the video authorized or fair use, you can archive it instead.

Can I enroll without providing a government ID, and can I delete my data later?

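No. Enrollment requires a government-issued photo ID and a short selfie video of your face. You can withdraw consent at any time: YouTube says it stops processing your data within 24 hours of opting out, and otherwise stores your face template, legal name, and ID information for up to three years from your last sign-in. YouTube also says this data is used only for identity verification and likeness detection and has never been used to train AI models, although privacy advocates note that Google's broader privacy policy could permit such use.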


LongStories is constantly evolving as it finds its product-market fit. Features, pricing, and offerings are continuously being refined and updated. The information in this blog post reflects our understanding at the time of writing. Please always check LongStories.ai for the latest information about our products, features, and pricing, or contact us directly for the most current details.